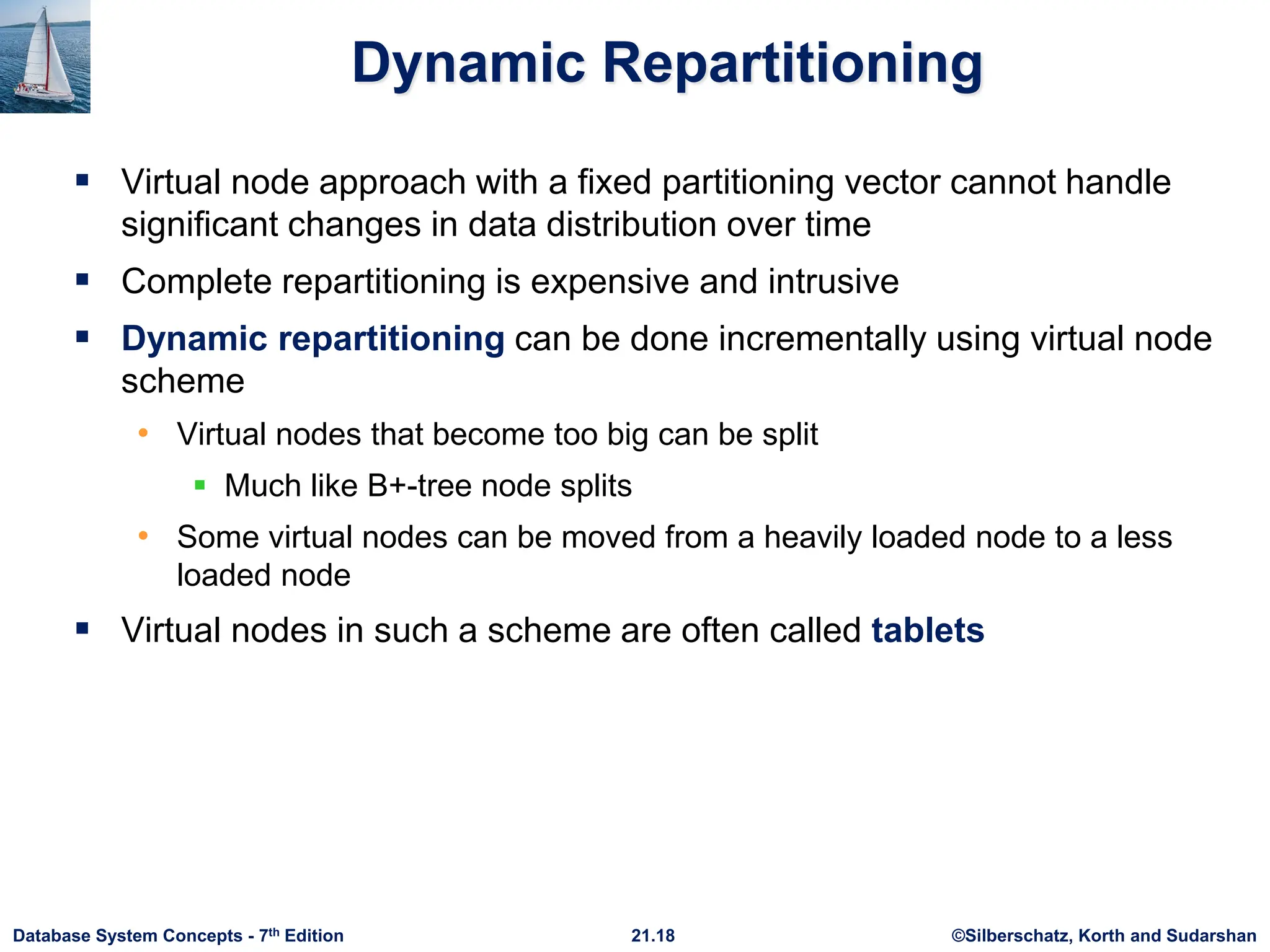

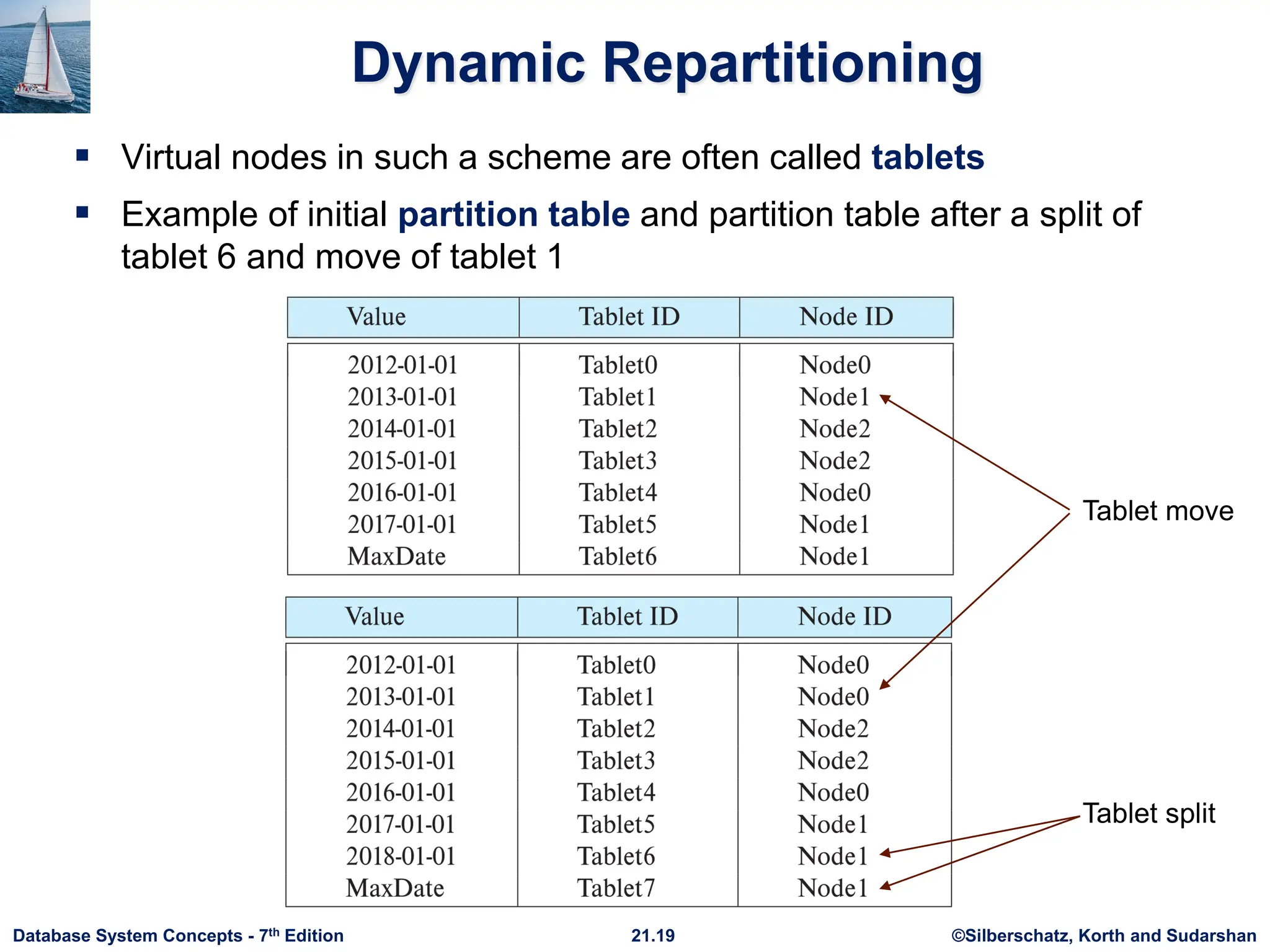

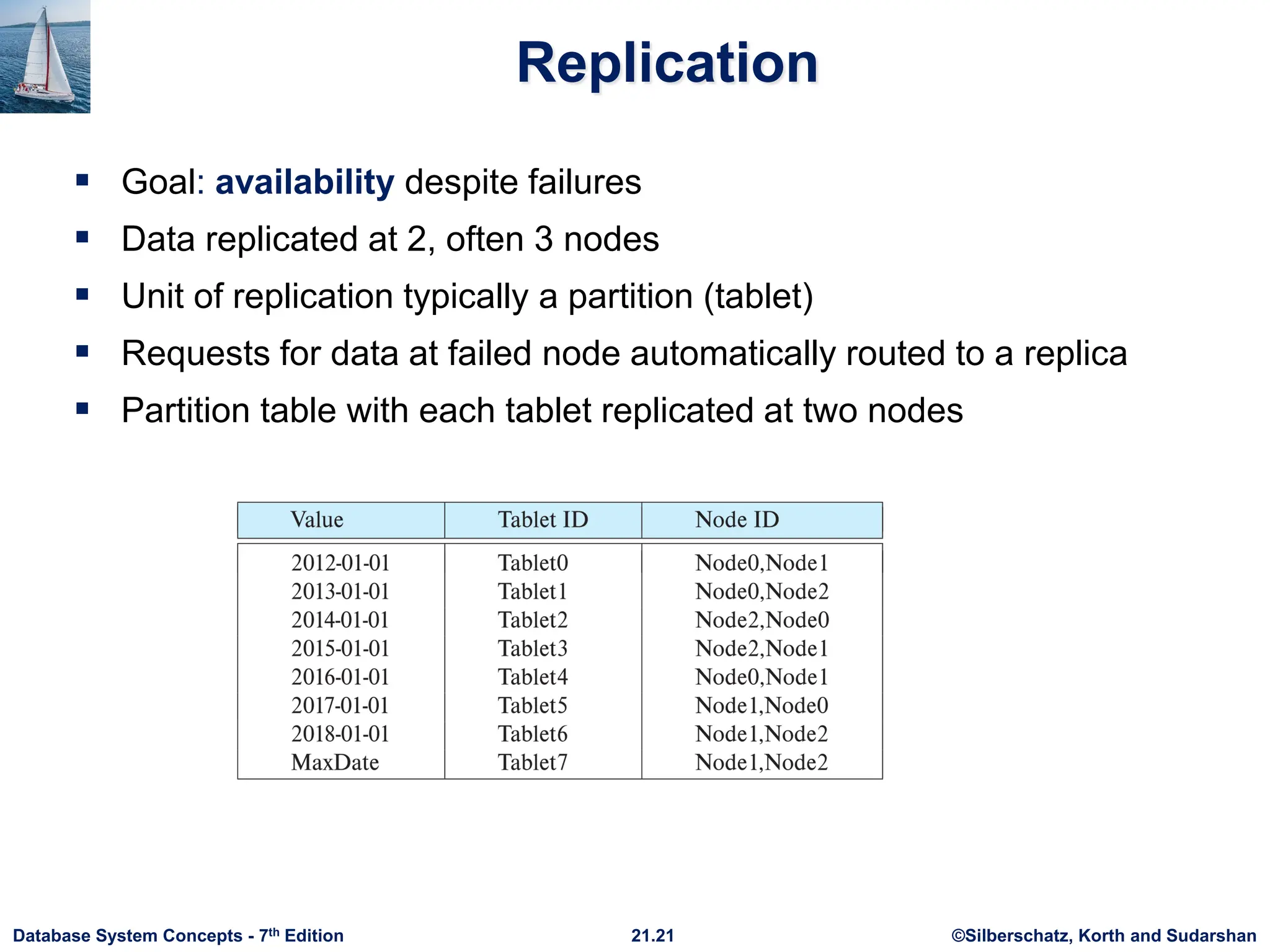

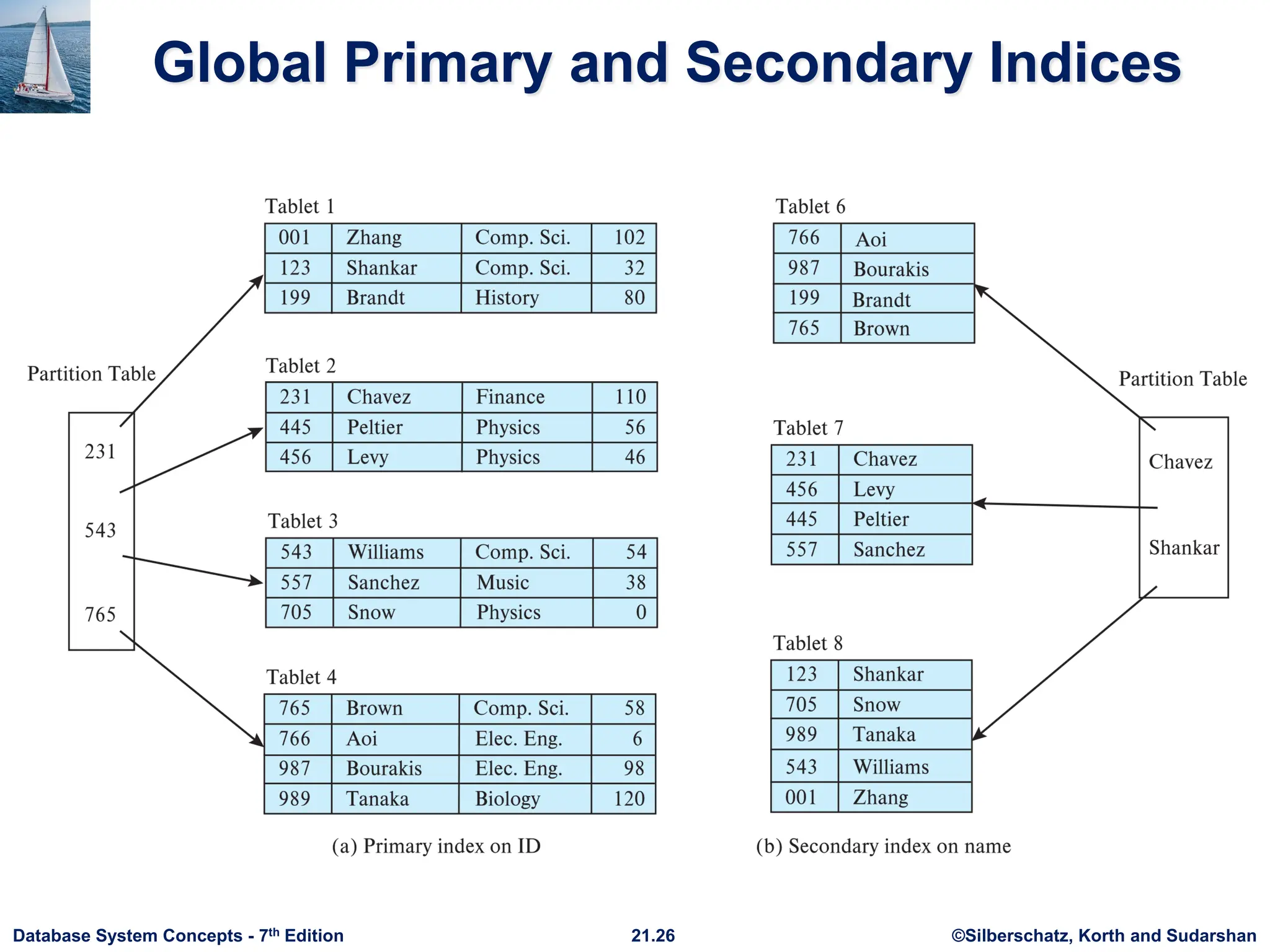

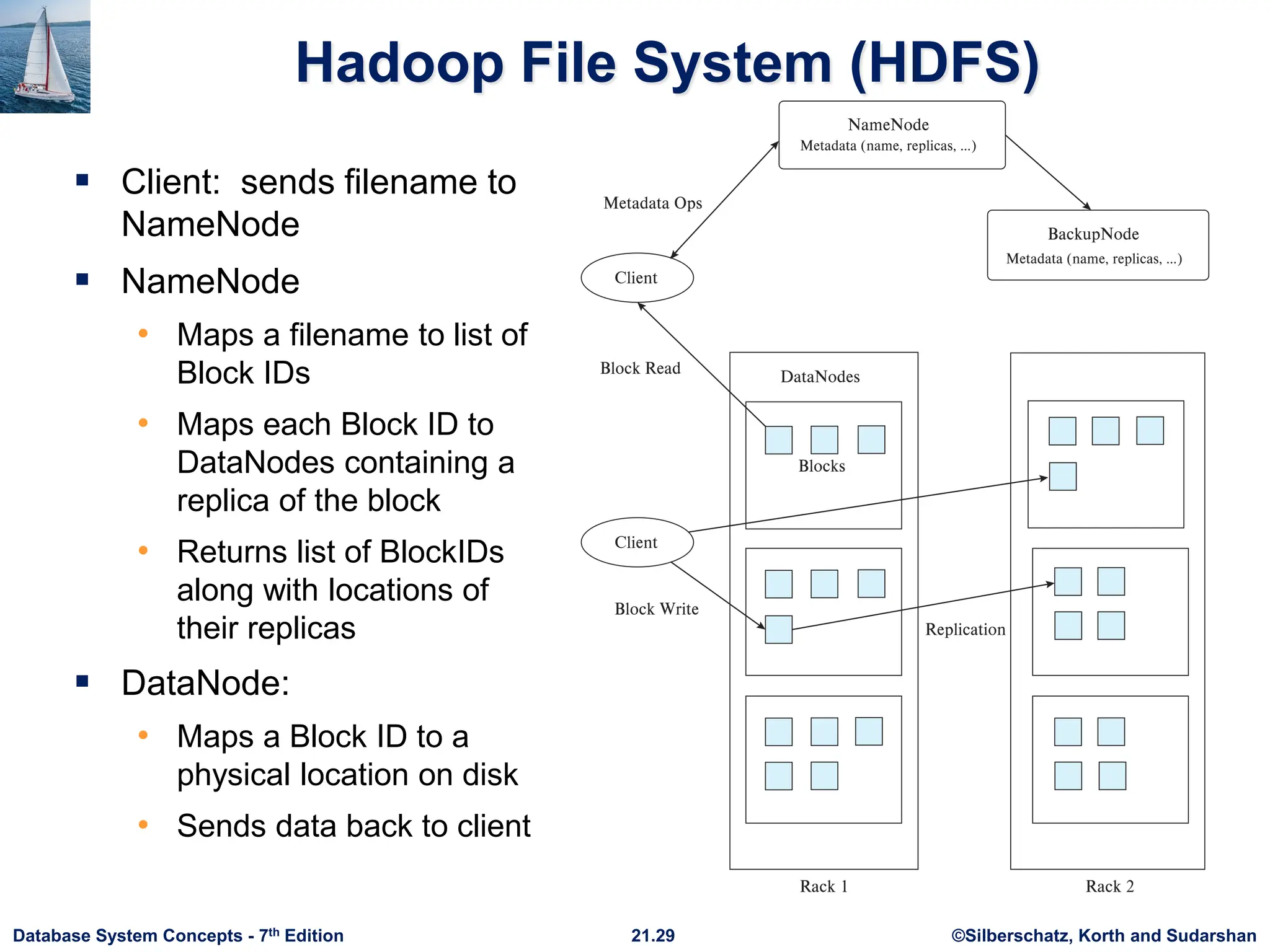

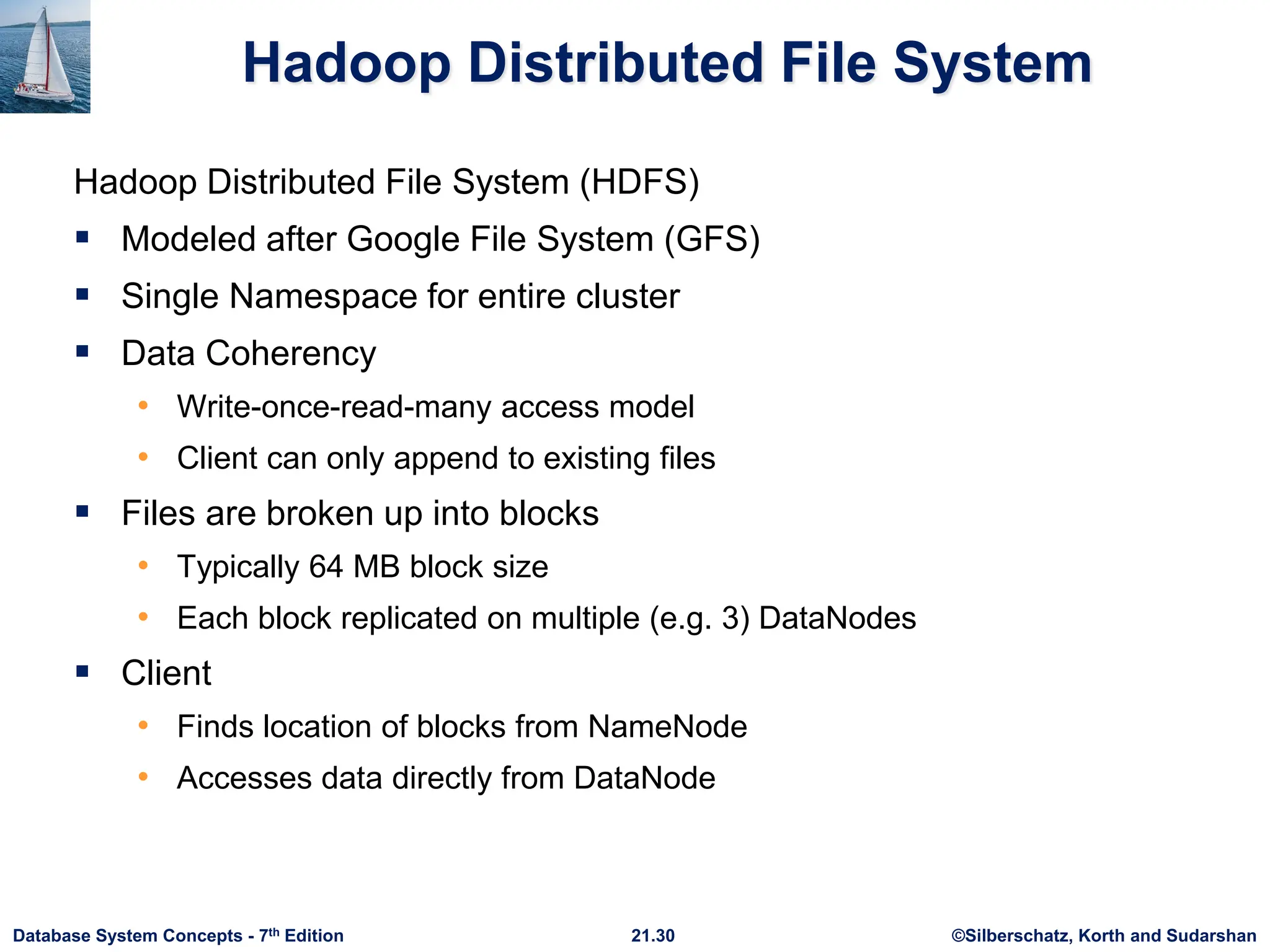

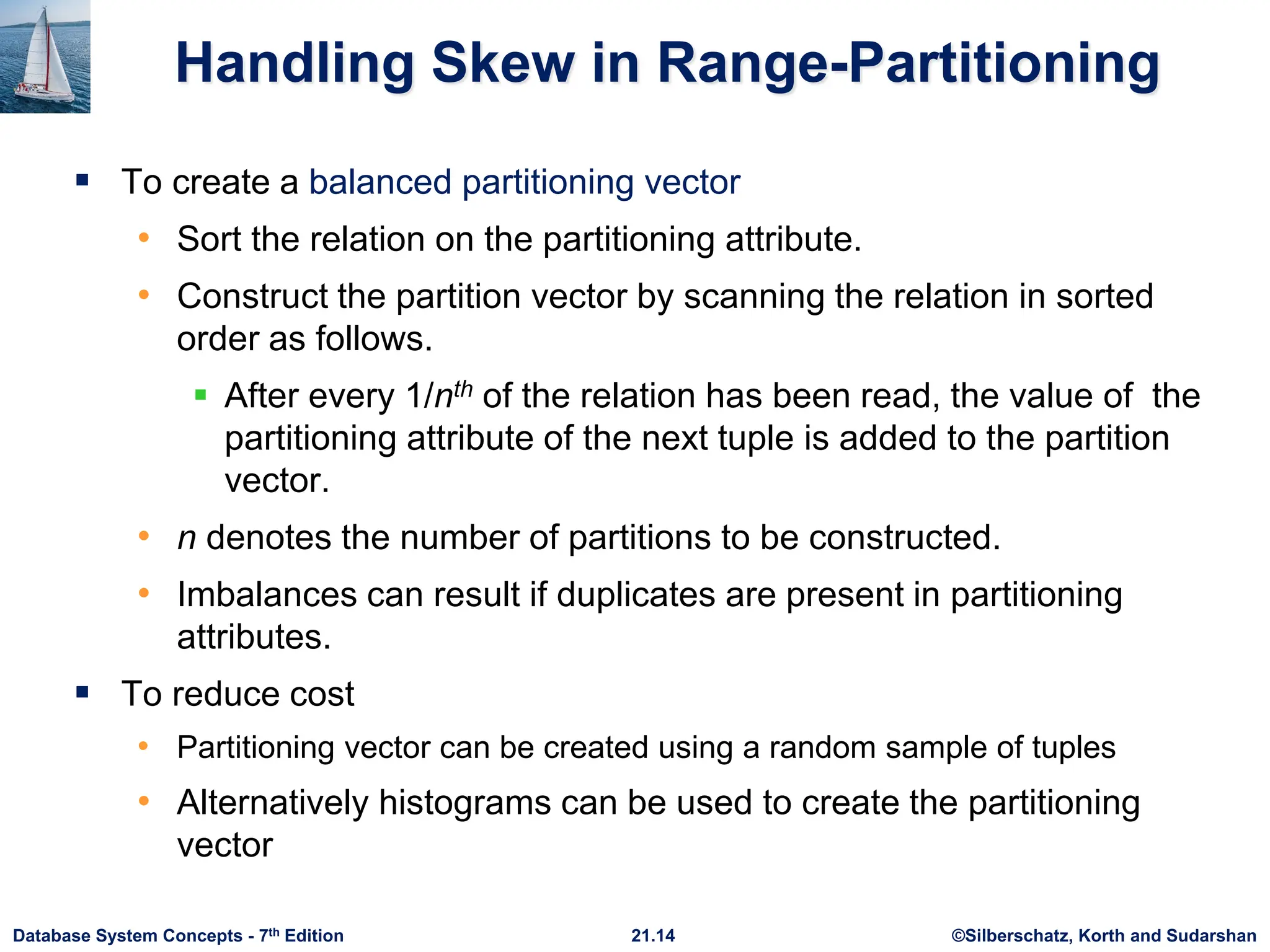

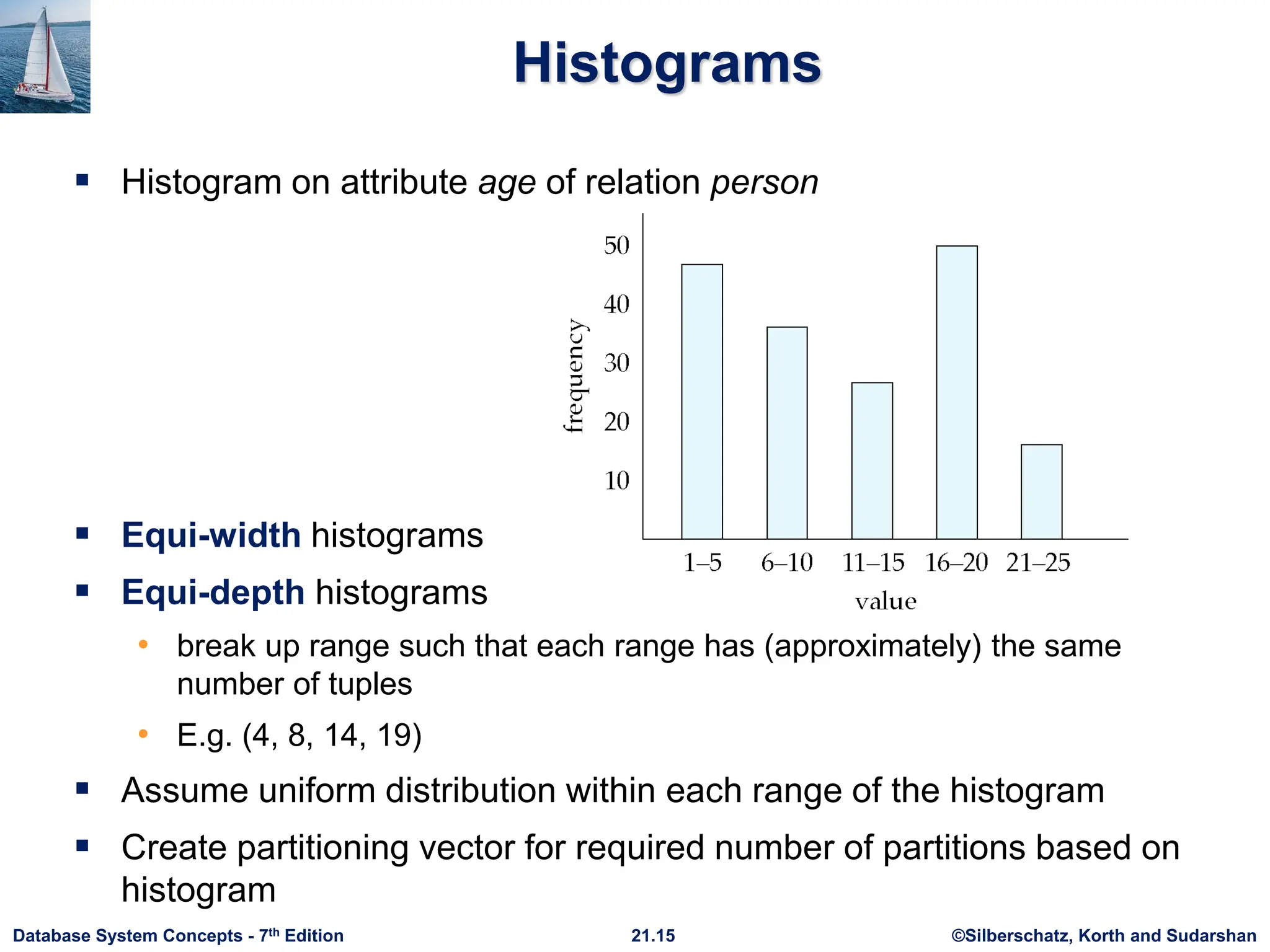

Chapter 21 of 'Database System Concepts' discusses parallel and distributed storage systems, highlighting the growing data storage needs and technological advancements in parallel machines. It covers various partitioning techniques, addressing challenges like data skew, and methods for handling data distribution and execution skew, including virtual node partitioning and dynamic repartitioning. The chapter also examines replication strategies for availability, the architecture of distributed file systems like HDFS, sharding techniques, and key-value storage systems.

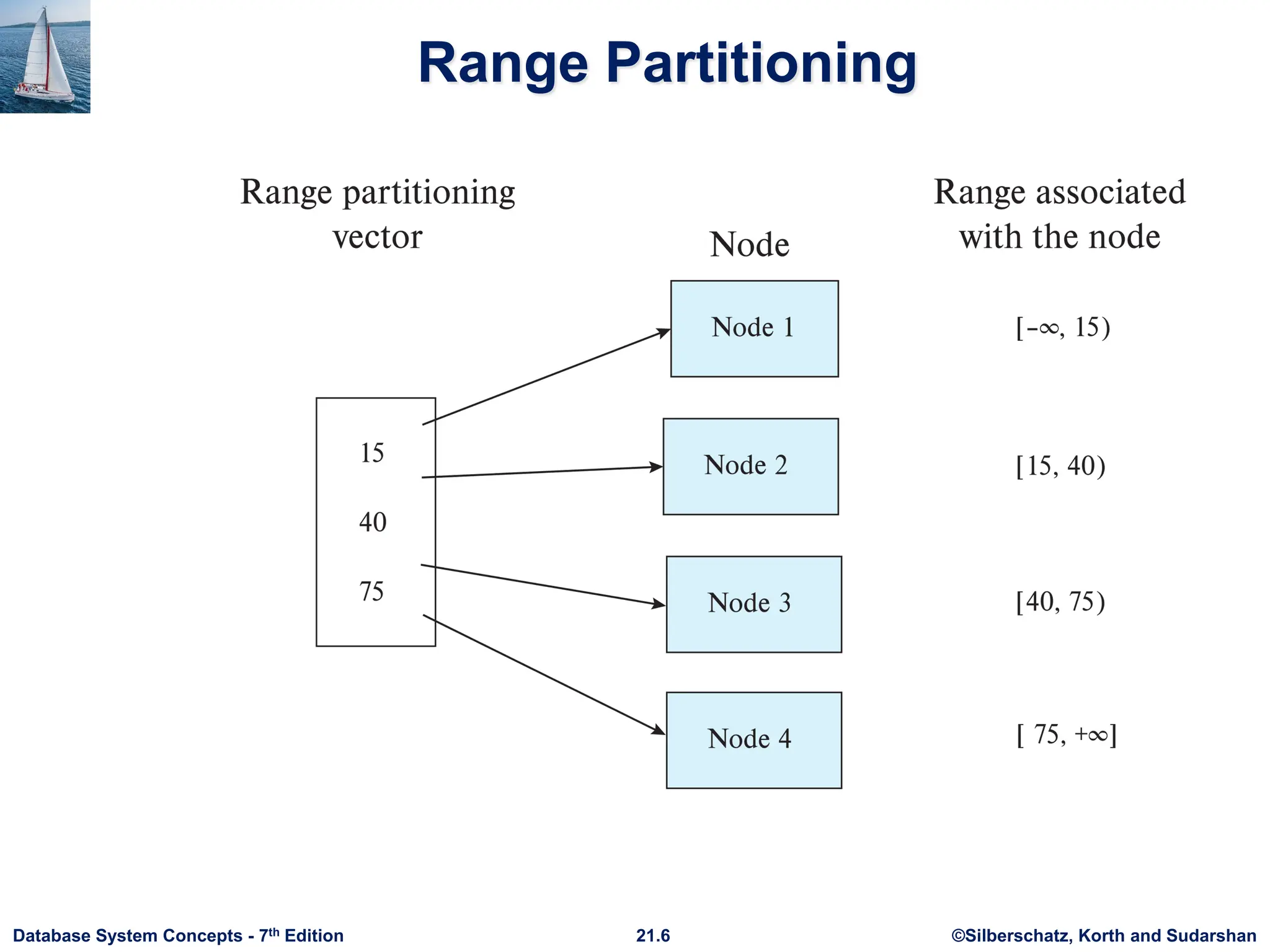

![©Silberschatz, Korth and Sudarshan 21.7 Database System Concepts - 7th Edition I/O Parallelism (Cont.) Partitioning techniques (cont.): Range partitioning: • Choose an attribute as the partitioning attribute. • A partitioning vector [vo, v1, ..., vn-2] is chosen. • Let v be the partitioning attribute value of a tuple. Tuples such that vi ≤ vi+1 go to node I + 1. Tuples with v < v0 go to node 0 and tuples with v ≥ vn-2 go to node n-1. E.g., with a partitioning vector [5,11] a tuple with partitioning attribute value of 2 will go to node 0, a tuple with value 8 will go to node 1, while a tuple with value 20 will go to node2.](https://image.slidesharecdn.com/u1distibuteddbstorage-241128032732-e40aa1c7/75/Parallel-and-distributed-storage-on-databases-7-2048.jpg)

![©Silberschatz, Korth and Sudarshan 21.16 Database System Concepts - 7th Edition Virtual Node Partitioning Key idea: pretend there are several times (10x to 20x) as many virtual nodes as real nodes • Virtual nodes are mapped to real nodes • Tuples partitioned across virtual nodes using range-partitioning vector Hash partitioning is also possible Mapping of virtual nodes to real nodes • Round-robin: virtual node i mapped to real node (i mod n)+1 • Mapping table: mapping table virtual_to_real_map[] tracks which virtual node is on which real node Allows skew to be handled by moving virtual nodes from more loaded nodes to less loaded nodes Both data distribution skew and execution skew can be handled](https://image.slidesharecdn.com/u1distibuteddbstorage-241128032732-e40aa1c7/75/Parallel-and-distributed-storage-on-databases-16-2048.jpg)