Let’s talk about LLM guardrails

Last Updated on October 13, 2025 by Editorial Team

Author(s): Adnan Siddiqi

Originally published on Towards AI.

In March, I received an unsolicited email from a website founder who had created an AI wrapper for the medical field. He had also subscribed me to his newsletter.

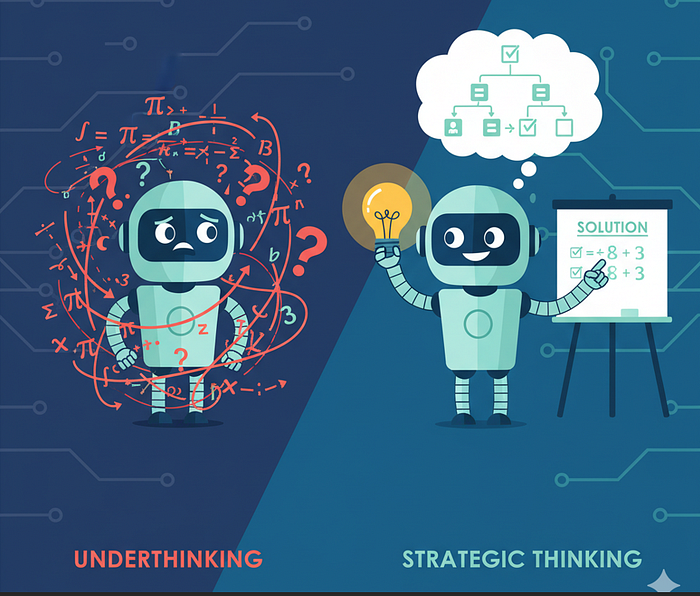

This article discusses why AI models, particularly LLMs, need guardrails to prevent exploitation and misuse. Through several examples, the author shows how inadequate setups can lead to serious problems, including unwarranted financial loss and technical challenges. Establishing firm guidelines and regulations for LLM usage is presented as a fundamental step toward practical and responsible use, particularly for businesses and developers entering the AI space.

Note: Content contains the views of the contributing authors and not Towards AI.