
TensorBoard is a built-in tool for providing measurements and visualizations in TensorFlow. Common machine learning experiment metrics, such as accuracy and loss, can be tracked and displayed in TensorBoard. TensorBoard is compatible with TensorFlow 1 and 2 code.

In TensorFlow 1, tf.estimator.Estimator saves summaries for TensorBoard by default. In TensorFlow 2, by contrast, you save summaries by passing a tf.keras.callbacks.TensorBoard callback to Model.fit.

This guide demonstrates how to use TensorBoard first in TensorFlow 1 with Estimators, and then how to carry out the equivalent process in TensorFlow 2 with Keras.

Setup

```python
import tensorflow.compat.v1 as tf1
import tensorflow as tf
import tempfile
import numpy as np
import datetime

%load_ext tensorboard
```

```python
mnist = tf.keras.datasets.mnist  # The MNIST dataset.

(x_train, y_train), (x_test, y_test) = mnist.load_data()
x_train, x_test = x_train / 255.0, x_test / 255.0
```

TensorFlow 1: TensorBoard with tf.estimator

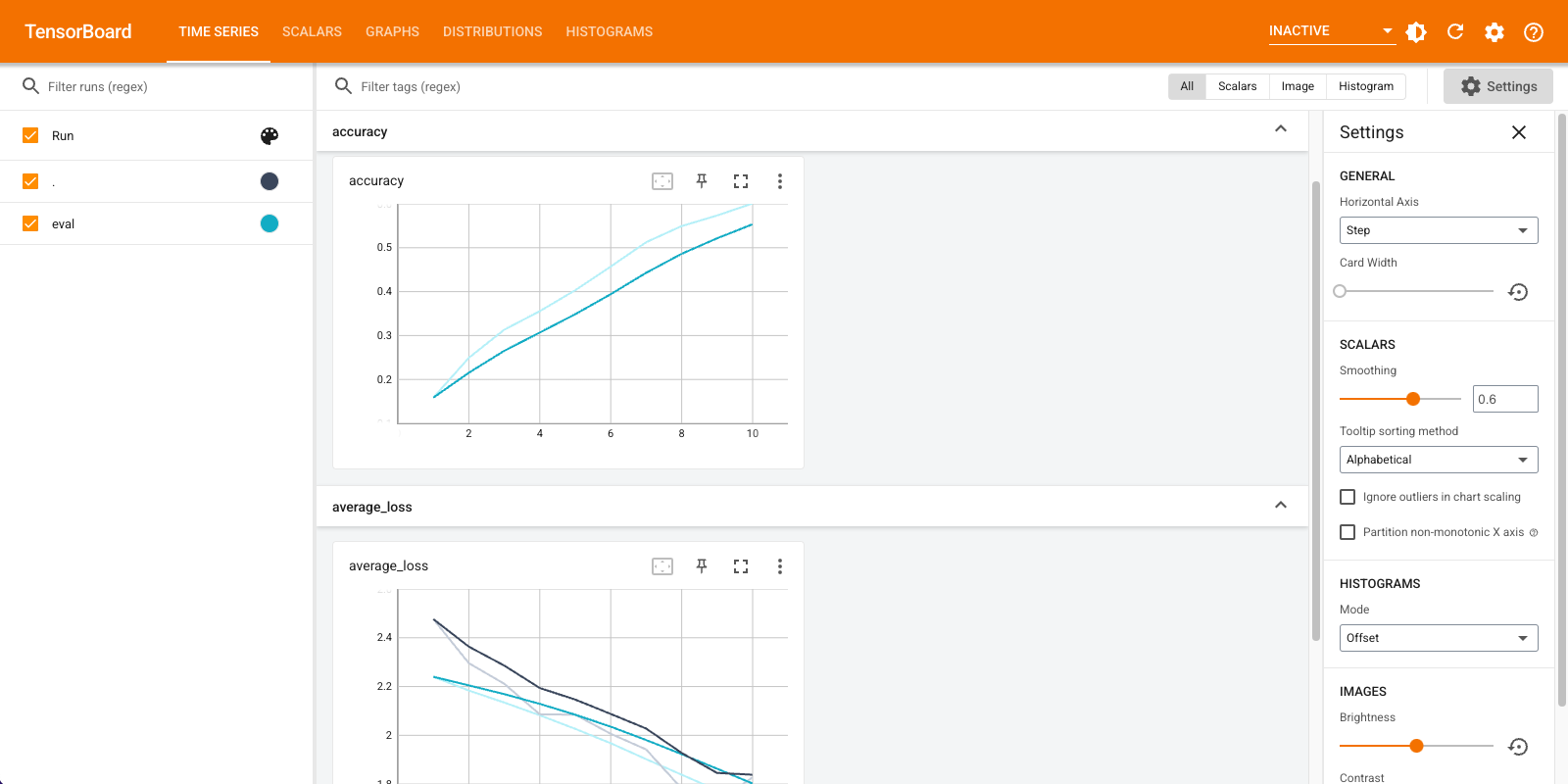

In this TensorFlow 1 example, you instantiate a tf.estimator.DNNClassifier, train and evaluate it on the MNIST dataset, and use TensorBoard to display the metrics:

```python
%reload_ext tensorboard

feature_columns = [tf1.feature_column.numeric_column("x", shape=[28, 28])]

config = tf1.estimator.RunConfig(save_summary_steps=1,
                                 save_checkpoints_steps=1)

path = tempfile.mkdtemp()

classifier = tf1.estimator.DNNClassifier(
    feature_columns=feature_columns,
    hidden_units=[256, 32],
    optimizer=tf1.train.AdamOptimizer(0.001),
    n_classes=10,
    dropout=0.1,
    model_dir=path,
    config=config
)

train_input_fn = tf1.estimator.inputs.numpy_input_fn(
    x={"x": x_train},
    y=y_train.astype(np.int32),
    num_epochs=10,
    batch_size=50,
    shuffle=True,
)

test_input_fn = tf1.estimator.inputs.numpy_input_fn(
    x={"x": x_test},
    y=y_test.astype(np.int32),
    num_epochs=10,
    shuffle=False
)

train_spec = tf1.estimator.TrainSpec(input_fn=train_input_fn, max_steps=10)
eval_spec = tf1.estimator.EvalSpec(input_fn=test_input_fn,
                                   steps=10,
                                   throttle_secs=0)

tf1.estimator.train_and_evaluate(estimator=classifier,
                                 train_spec=train_spec,
                                 eval_spec=eval_spec)

%tensorboard --logdir {classifier.model_dir}
```
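If the TensorBoard view comes up empty, it can help to confirm that summaries were actually written to the log directory. The following is a small sketch and not part of the Estimator API: find_event_files is a hypothetical helper, and it assumes that TensorFlow event files have names beginning with events.out.tfevents.

```python
import os


def find_event_files(logdir):
    """Return sorted paths of TensorBoard event files found anywhere under logdir.

    Assumption: event file names start with "events.out.tfevents.".
    """
    matches = []
    for root, _dirs, files in os.walk(logdir):
        for name in files:
            if name.startswith("events.out.tfevents."):
                matches.append(os.path.join(root, name))
    return sorted(matches)
```

For example, after the training run above, find_event_files(classifier.model_dir) should return at least one path. An empty list suggests that no summaries were saved.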

TensorFlow 2: TensorBoard with a Keras callback and Model.fit

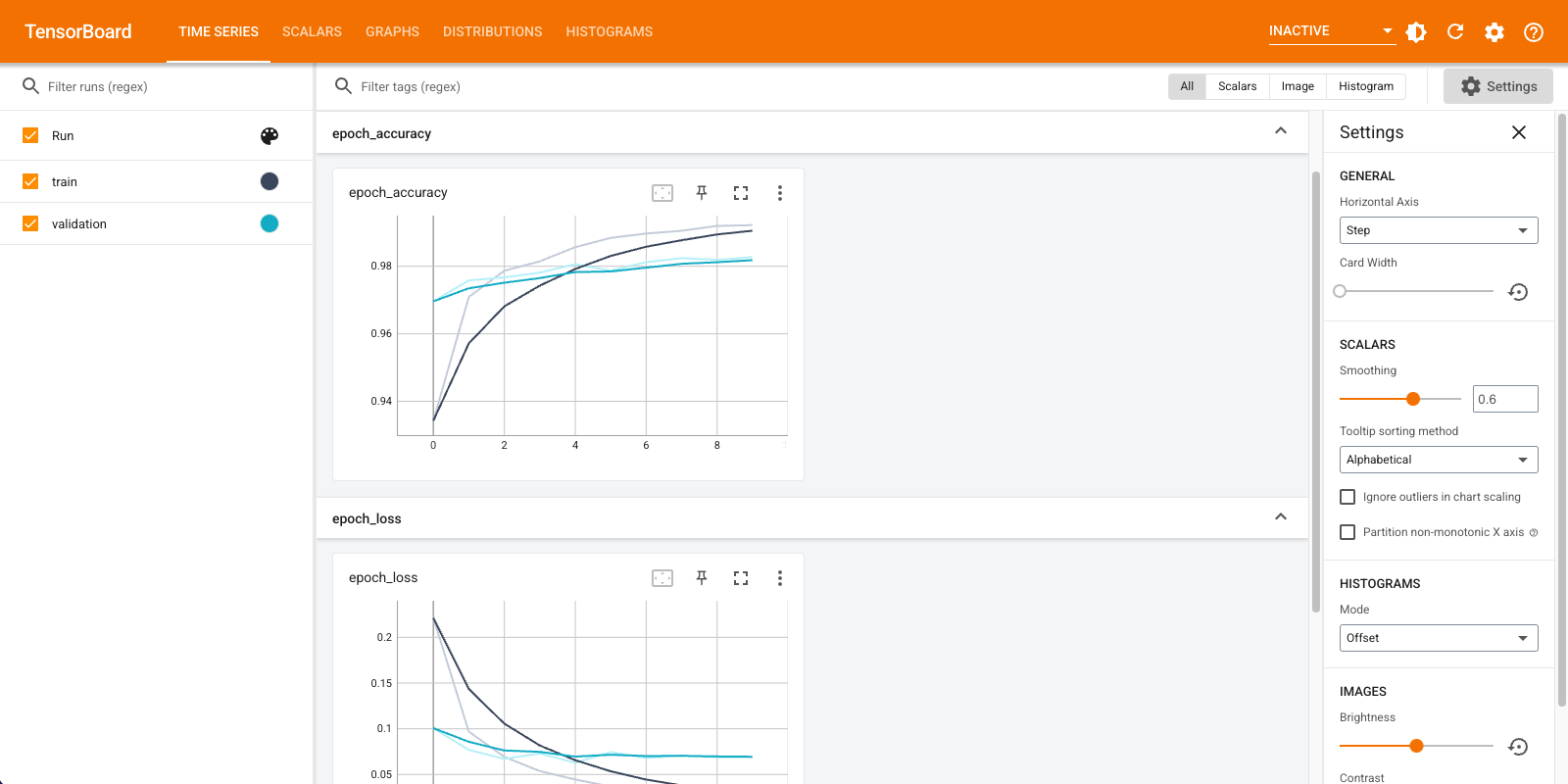

In this TensorFlow 2 example, you create and store logs with the tf.keras.callbacks.TensorBoard callback, and train the model. The callback tracks the accuracy and loss per epoch. It is passed to Model.fit in the callbacks list.

```python
%reload_ext tensorboard

def create_model():
  return tf.keras.models.Sequential([
    tf.keras.layers.Flatten(input_shape=(28, 28), name='layers_flatten'),
    tf.keras.layers.Dense(512, activation='relu', name='layers_dense'),
    tf.keras.layers.Dropout(0.2, name='layers_dropout'),
    tf.keras.layers.Dense(10, activation='softmax', name='layers_dense_2')
  ])

model = create_model()
model.compile(optimizer='adam',
              loss='sparse_categorical_crossentropy',
              metrics=['accuracy'],
              steps_per_execution=10)

log_dir = tempfile.mkdtemp()
tensorboard_callback = tf.keras.callbacks.TensorBoard(
    log_dir=log_dir,
    histogram_freq=1)  # Enable histogram computation with each epoch.

model.fit(x=x_train,
          y=y_train,
          epochs=10,
          validation_data=(x_test, y_test),
          callbacks=[tensorboard_callback])

%tensorboard --logdir {tensorboard_callback.log_dir}
```
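Each call to tempfile.mkdtemp() returns an unrelated directory, which makes it awkward to compare several training runs side by side. One common pattern, sketched here as an assumption rather than something this guide prescribes, is to give every run its own timestamped subdirectory under a shared parent and point TensorBoard at the parent. The helper name make_run_dir and the fit/ prefix are hypothetical.

```python
import datetime
import os
import tempfile


def make_run_dir(base_dir, prefix="fit", now=None):
    """Create and return a timestamped run directory under base_dir/prefix.

    `now` can be supplied to get a deterministic directory name;
    it defaults to the current local time.
    """
    now = now or datetime.datetime.now()
    run_dir = os.path.join(base_dir, prefix, now.strftime("%Y%m%d-%H%M%S"))
    os.makedirs(run_dir, exist_ok=True)
    return run_dir


base_dir = tempfile.mkdtemp()      # Shared parent for all runs.
log_dir = make_run_dir(base_dir)   # Directory for this particular run.
print(log_dir)
```

You would then pass log_dir to tf.keras.callbacks.TensorBoard and launch TensorBoard with --logdir set to base_dir, so that each run shows up as a separate entry.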

Next steps

- Learn more about TensorBoard in the Get started guide.

- For lower level APIs, refer to the tf.summary migration to TensorFlow 2 guide.